Articles

From our blog.

SEO & AEO Mar 13, 2026

What is AEO - and why should you care?

5 min READ

SEO & AEO Mar 13, 2026

What is GEO - and how does it fit with AEO?

4 min READ

SEO & AEO Feb 22, 2026

Simple Image Optimiser: Optimise images for SEO without Photoshop.

8 min READ

AI Transformation Feb 18, 2026

AI automation does yoga in Crouch End.

7 min READ

Website Design Feb 15, 2026

WordPress vs Astro: Why we switched after 15 years.

9 min READ

24 Hour Website Jan 28, 2026

Fast Website Launch - Why Wait Weeks?

5 min READ

AI Transformation Dec 14, 2025

What I learned building my first AI email automation.

6 min READ

AI Transformation Dec 7, 2025

AI blog post writing and the mistake that tripled my traffic.

8 min READ

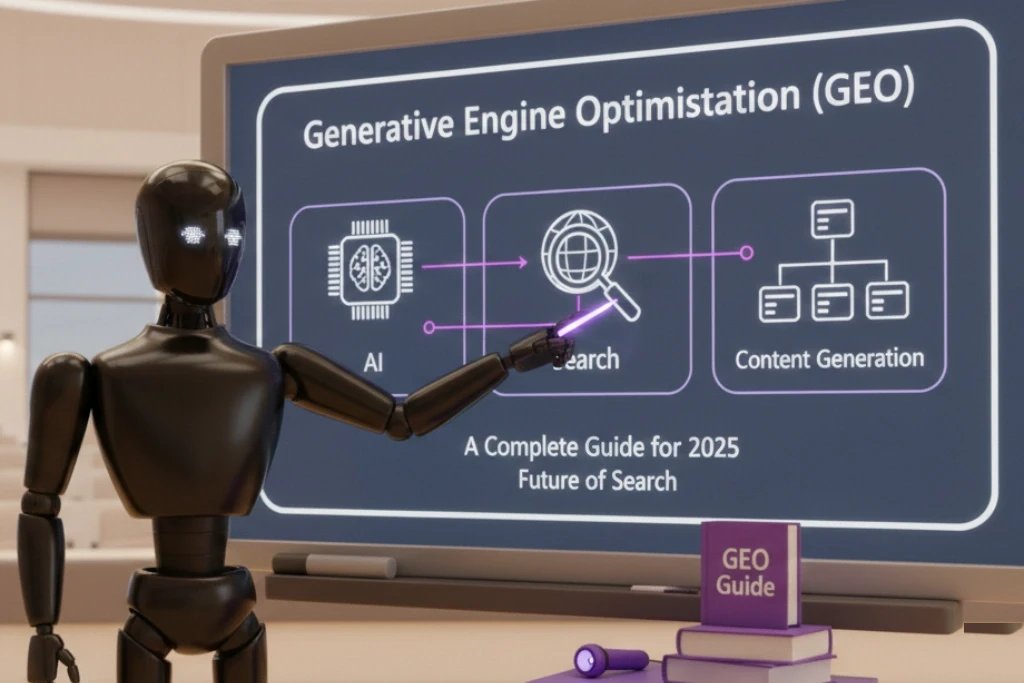

SEO & AEO Nov 4, 2025

Generative Engine Optimisation (GEO): A practical guide for 2026.

12 min READ

SEO & AEO Oct 15, 2025

Crawl errors 2025: What they are & why they matter.

10 min READ

Newsletter

Get new articles in your inbox.

Our latest musings on web design, AI, and SEO - straight to your inbox. No spam.